We wanted to make security footage easier to search and monitor. No more watching hours of empty video.

PROJECT OVERVIEW

THE PROBLEM

- Too Much Footage: Security teams can't watch everything at once.

- Hard to Search: Finding a specific event in hours of video is tedious.

- Review Fatigue: Important events get missed when attention wavers.

WHAT IT DOES

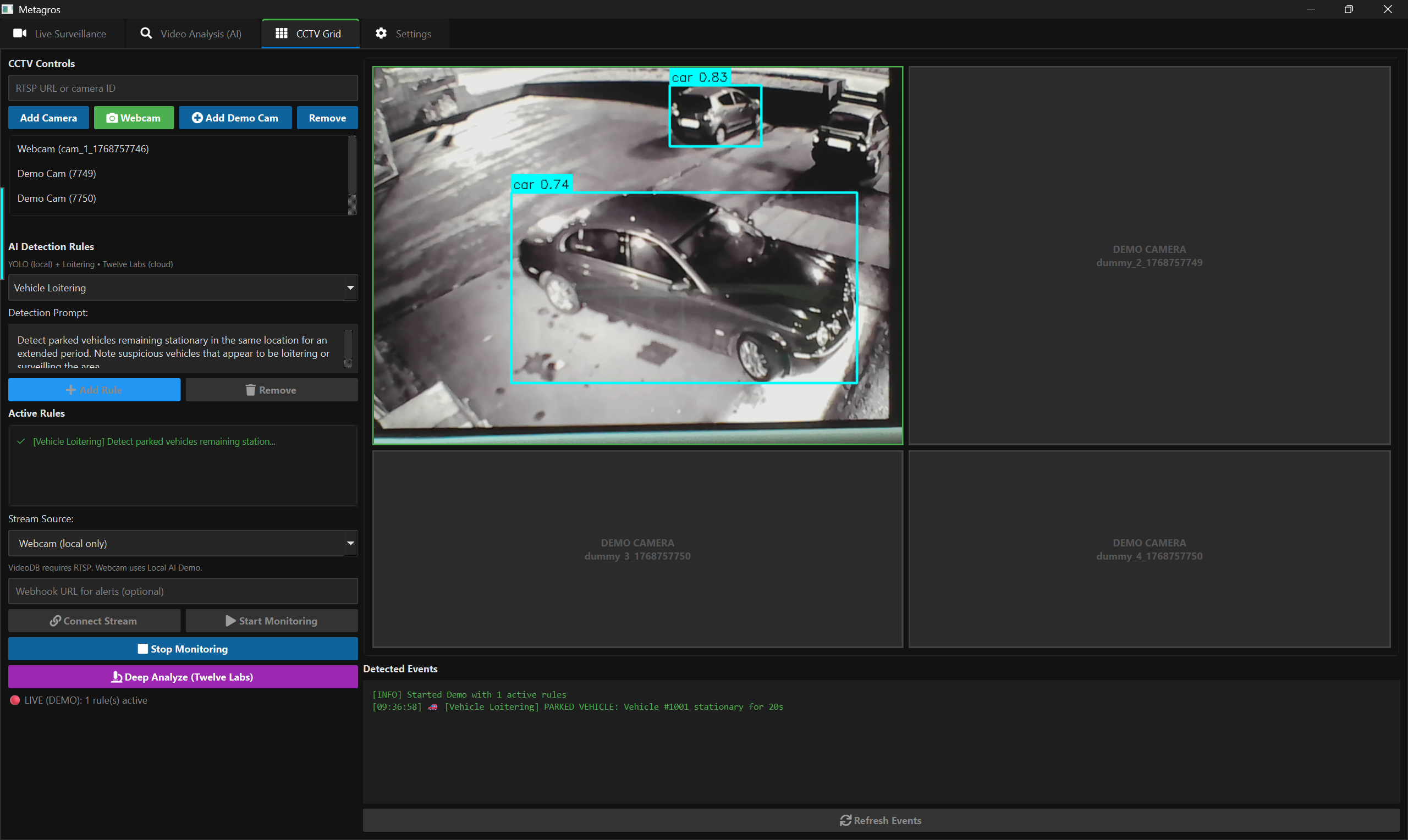

- Live Detection: Real-time object detection on live video using YOLO.

- Semantic Search: Natural-language search over recorded footage.

- Effort Reduction: Reduces the time needed to review footage.
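As a rough illustration of how live detection can feed effort reduction, the sketch below collapses per-frame detections into a short list of events, so a reviewer scans a handful of entries instead of every frame. It is not the project's actual code. The `Event` type, `group_into_events`, `yolo_frames`, the thresholds, and the `yolov8n.pt` weights file are all assumptions made for the example. The camera loop assumes the `opencv-python` and `ultralytics` packages are installed.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, List, Tuple

# A detection is (label, confidence). This shape is an assumption for the sketch.
Detection = Tuple[str, float]


@dataclass
class Event:
    label: str
    start_frame: int
    end_frame: int


def group_into_events(
    frames: Iterable[List[Detection]],
    min_conf: float = 0.5,
    max_gap: int = 5,
) -> List[Event]:
    """Collapse per-frame detections into one event per continuous sighting."""
    open_events: Dict[str, Event] = {}
    closed: List[Event] = []
    for idx, detections in enumerate(frames):
        # Keep only labels detected with enough confidence in this frame.
        seen = {label for label, conf in detections if conf >= min_conf}
        for label in seen:
            event = open_events.get(label)
            if event is None:
                open_events[label] = Event(label, idx, idx)
            else:
                event.end_frame = idx
        # Close any event whose label has been absent for more than max_gap frames.
        for label in list(open_events):
            if idx - open_events[label].end_frame > max_gap:
                closed.append(open_events.pop(label))
    closed.extend(open_events.values())
    return sorted(closed, key=lambda e: (e.start_frame, e.label))


def yolo_frames(source=0, weights: str = "yolov8n.pt") -> Iterator[List[Detection]]:
    """Yield detections per frame from a camera or video file (hypothetical helper)."""
    # Imported lazily so the grouping logic above works without these packages.
    import cv2
    from ultralytics import YOLO

    model = YOLO(weights)
    cap = cv2.VideoCapture(source)
    try:
        while True:
            ok, frame = cap.read()
            if not ok:
                break
            result = model(frame, verbose=False)[0]
            yield [
                (model.names[int(box.cls[0])], float(box.conf[0]))
                for box in result.boxes
            ]
    finally:
        cap.release()
```

With a camera attached, `group_into_events(yolo_frames(0))` would produce the event list once the stream ends. A live app would more likely emit events incrementally as they close.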

HACKATHON GOALS

- Effective Monitoring: Helping teams focus on what matters.

- Less Overwhelm: Filtering out the noise of constant video feeds.

- Working Prototype: A functional desktop app built in 24 hours.

HOW IT'S BUILT

CORE

THE PROCESS

The Accomplishment: Successfully combined live detection with semantic video search, creating a unified interface for real-time and retrospective analysis.

The Timeframe: Built a working desktop prototype within the 24-hour hackathon window, from concept to execution.

The Challenge: Managed real-time performance across video streams while integrating heavy AI models (and survived Cursor/Antigravity crashing!).
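One common way to keep a video feed responsive while a heavy model runs is to run detection only on every Nth frame and reuse the most recent result in between. The write-up does not say which technique the team used, so the sketch below is a generic illustration only. `detect_every_nth` and its `stride` parameter are hypothetical names, and `detect` stands in for any detector function.

```python
from typing import Callable, Iterable, Iterator, Optional, Tuple, TypeVar

Frame = TypeVar("Frame")
Result = TypeVar("Result")


def detect_every_nth(
    frames: Iterable[Frame],
    detect: Callable[[Frame], Result],
    stride: int = 3,
) -> Iterator[Tuple[Frame, Optional[Result]]]:
    """Yield (frame, result) for every frame, calling detect on every stride-th one."""
    if stride < 1:
        raise ValueError("stride must be at least 1")
    last: Optional[Result] = None
    for index, frame in enumerate(frames):
        if index % stride == 0:
            # Pay the model cost only here; other frames reuse this result.
            last = detect(frame)
        yield frame, last
```

A larger `stride` lowers the compute load but makes overlays lag behind fast-moving objects, so the value is a trade-off to tune per camera.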

WHAT'S NEXT

MULTI-CAMERA SUPPORT

Scaling the system to handle multiple simultaneous feeds for broader coverage.

REAL-WORLD TESTING

Deploying with real security camera feeds to validate performance in the field.

SECURE REMOTE ACCESS

Enabling encrypted remote monitoring for command centers.

WHAT WE LEARNED

HYBRID AI SYSTEMS

How to effectively combine traditional computer vision (OpenCV/YOLO) with modern VLMs (Twelve Labs).

SYSTEM DESIGN

Designing a system that balances real-time performance, accuracy, and usability in a desktop environment.